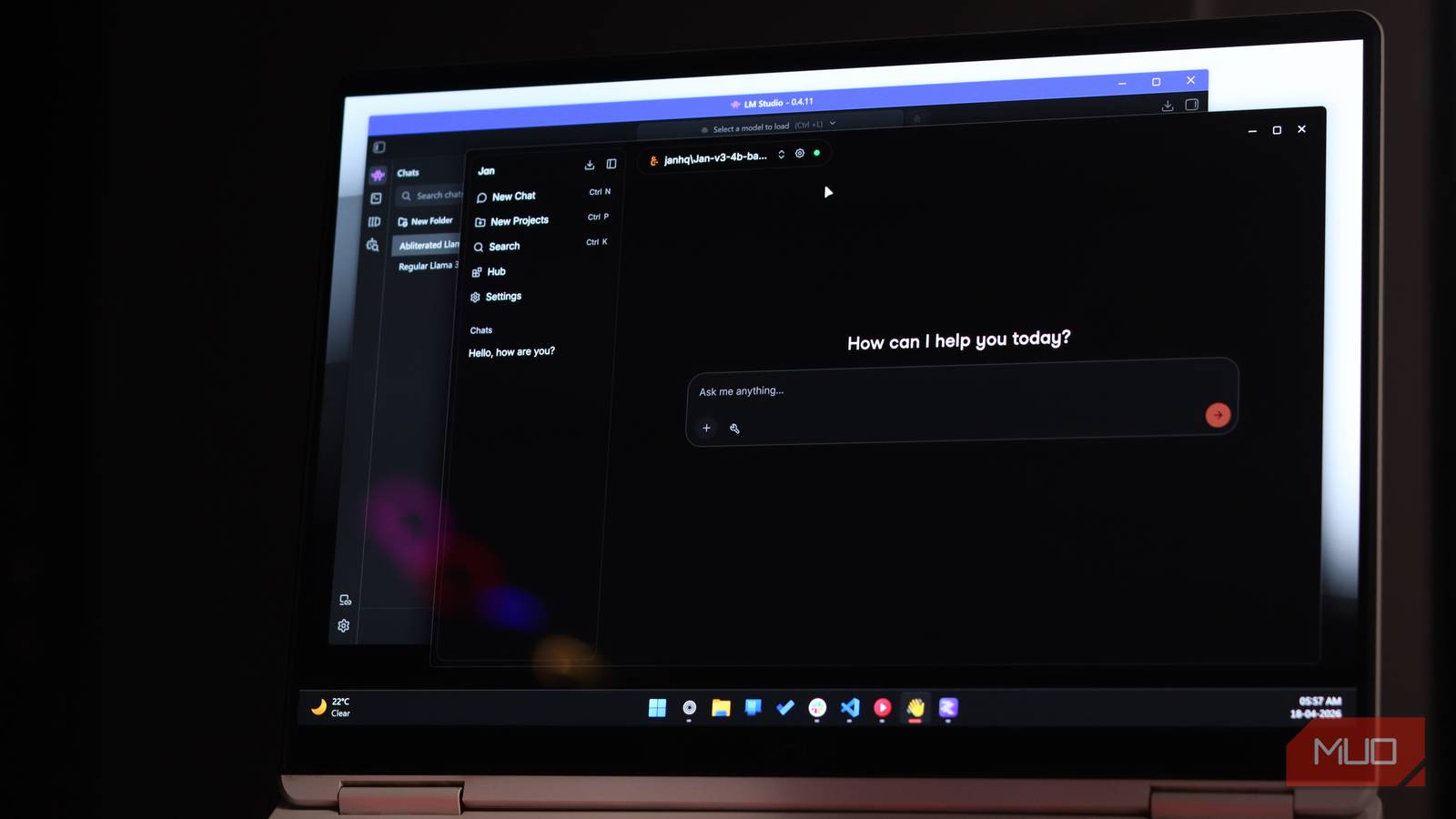

I have been really getting into running LLMs locally lately, and it has honestly become one of my newest hobbies. There are tons of cool things you can do once you get a local AI server set up.

But one thing I keep noticing is that people get stuck before they even get started. Choosing your first model is the part that trips everyone up. Once you understand what you’re looking at, though, it becomes really easy.

All the information you need is right in the model name

No, you don’t need to understand code

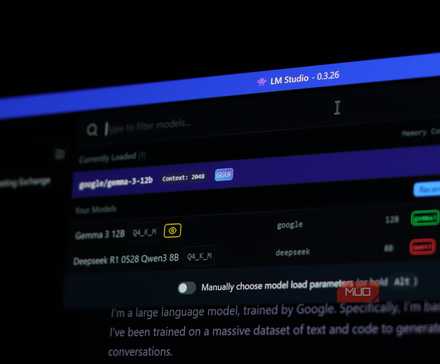

When you head over to Hugging Face and start browsing for models, you’ll notice that every single model name looks like a jumble of letters and numbers. For example, you might come across gemma-4-26B-A4B, which is definitely a mouthful.

The thing is, this name alone tells you a LOT about the model itself. The name is basically a spec sheet. Once you know how to read it, you’ll already know a huge amount about what you are looking at before you even click through to the model page.

When you look at different models, you will notice the same components showing up across almost every model name. Here is what each one actually means:

- Family Name (Gemma, Qwen, Llama): Think of this as a brand name. It tells you who made the model and which generation it is. Gemma is made by Google, Qwen is Alibaba, and Llama is Meta.

- Total Parameters (26B): This is the full size of the model, measured in billions of parameters. Without getting too deep into what parameters actually are, just think of it as a rough measure of how much the model knows and how capable it is. Bigger number, smarter model, but also heavier to run.

- Activated Parameters (A4B): This one only shows up on certain models, and it is really important to understand. Even though this model has 26 billion parameters in total, only about 4 billion are doing any actual work at a given time.

- Quantization Level (Q4_K_M, Q8_0, 4bit): You won’t see this on every model name, but it’s still worth knowing. Think of it as a compression setting. A lower number, like Q4, means the model file is smaller and runs faster, with a minor quality trade-off that most people will not notice in everyday use.
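To make the breakdown above concrete, here is a minimal sketch that splits a model name into those components. The `parse_model_name` helper and its regular expression are hypothetical, written only for the naming style used in this article. Real names on Hugging Face vary a lot, so treat it as an illustration rather than a general parser.

```python
import re

# Hypothetical pattern: covers only the naming style described in this article.
NAME_PATTERN = re.compile(
    r"^(?P<family>[A-Za-z]+)"              # family name, e.g. gemma, Qwen
    r"[-_]?(?P<version>\d+(?:\.\d+)?)?"    # optional generation, e.g. 4 or 3.6
    r"-(?P<total>\d+(?:\.\d+)?)B"          # total parameters, in billions
    r"(?:-A(?P<active>\d+(?:\.\d+)?)B)?"   # optional activated parameters
    r"(?:-(?P<quant>Q\d\w*|\d+bit))?$",    # optional quantization tag
    re.IGNORECASE,
)


def parse_model_name(name: str) -> dict:
    """Split a model name into family, version, sizes, and quantization."""
    match = NAME_PATTERN.match(name)
    if match is None:
        raise ValueError(f"unrecognized model name: {name!r}")
    parts = match.groupdict()
    return {
        "family": parts["family"],
        "version": parts["version"],
        "total_params_b": float(parts["total"]),
        # None means the name carries no activated-parameter tag.
        "active_params_b": float(parts["active"]) if parts["active"] else None,
        "quantization": parts["quant"],
    }


if __name__ == "__main__":
    for example in ("gemma-4-26B-A4B", "Qwen3.6-27B-4bit"):
        print(example, "->", parse_model_name(example))
```

Names that do not follow this pattern raise a `ValueError`, which is a deliberate choice here: guessing at an unfamiliar name would be worse than saying so.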

Once you get comfortable reading model names this way, you’re already 80% of the way there. You just need to figure out what you can run on your machine, and what you can expect from different models.

You just need to understand what your hardware can run

Memory is the key

To run an LLM locally, the most important thing is the amount of memory the model can use. If you are on an Apple Silicon Mac, your available memory is your total Unified Memory. If you are on a dedicated GPU, it is your VRAM.

The most basic thing to keep in mind is the parameter count. That number, in billions, is what determines how much memory a model needs to load. As a rough estimate, 8GB of memory gets you around 8B models, 16GB gets you around 13-16B, and 24GB opens up 30B and above. Beyond that, two other things majorly affect what you can run and how fast it feels.

The first is quantization. A Q4 model has been compressed quite aggressively, roughly halving the memory it needs compared to Q8. A Q8 model has lower compression, so it is larger but closer to the original. For most people, Q4 is completely fine; the quality difference in everyday use is barely noticeable.
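The effect of quantization on memory can be sketched with a few lines of arithmetic. The `weight_memory_gb` function below is a hypothetical helper, and it assumes the nominal bit width (8 bits for Q8, 4 bits for Q4). Real formats such as Q4_K_M store slightly more than 4 bits per weight, and the context cache needs extra memory on top, so read the results as lower bounds.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_per_weight = bits_per_weight / 8
    # billions of parameters * bytes per parameter = gigabytes
    return params_billions * bytes_per_weight


if __name__ == "__main__":
    # Assumed nominal bit widths; actual quantized files are a bit larger.
    for label, bits in [("Q8", 8), ("Q4", 4)]:
        print(f"8B model at {label}: ~{weight_memory_gb(8, bits):.1f} GB")
```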

The second is MoE, short for mixture of experts. When you see a tag like A3B in a model name, it means the model only activates about 3B parameters for each token it generates, which makes it feel much faster than a dense model of the same total size.

Common misconception: A3B does not mean the model runs on 3GB of memory. You still need to load the entire model into memory; the activated parameters just mean it generates responses faster.
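A small sketch of that distinction, using the gemma-4-26B-A4B name from earlier: memory follows the total parameter count, while the per-token work follows the activated count. The `MoEModel` class is hypothetical, and the 4-bit default is an assumption made purely for the example.

```python
from dataclasses import dataclass


@dataclass
class MoEModel:
    total_params_b: float       # every expert has to be loaded into memory
    active_params_b: float      # parameters actually used per generated token
    bits_per_weight: float = 4  # assumed quantization, for illustration only

    def memory_gb(self) -> float:
        """Weights-only memory estimate: driven by TOTAL parameters."""
        return self.total_params_b * self.bits_per_weight / 8

    def active_fraction(self) -> float:
        """Share of the weights doing work on any single token."""
        return self.active_params_b / self.total_params_b


if __name__ == "__main__":
    gemma = MoEModel(total_params_b=26, active_params_b=4)
    print(f"memory needed: ~{gemma.memory_gb():.1f} GB")
    print(f"active per token: {gemma.active_fraction():.0%} of the weights")
```

The activated count never appears in `memory_gb`, which is the whole point: it changes how fast the model responds, not how much memory it occupies.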

So take something like Qwen3.6-27B-4bit. It is a 27B model, so at 8 bits per parameter you would need around 27GB of memory just to load it. But because it is 4-bit quantized, that drops to around 13-14GB. That puts it within reach of something like a base Mac Mini, though you will want to leave some headroom for the context and the rest of the system.
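Written out, the arithmetic behind that estimate looks roughly like this. It counts the weights only and assumes exactly 8 or 4 bits per parameter, which real quantized files only approximate:

```latex
\text{memory (GB)} \;\approx\; N_{\text{params, billions}} \times \frac{\text{bits per weight}}{8}

27 \times \frac{8}{8} = 27\ \text{GB}
\qquad\longrightarrow\qquad
27 \times \frac{4}{8} = 13.5\ \text{GB}
```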

Start small, you can always go bigger

Move your way up

There is no golden model. Every choice you make is a trade-off between speed and quality. A smaller model will respond faster but will occasionally fall short on complex tasks. A larger model will be more capable but slower. Neither is wrong; it just depends on what you are actually using it for, and the only real way to figure that out is to use it.

So just pick something that fits your hardware and run it for a few days. Try it for the things you actually want to do. Writing, coding, summarizing, whatever. You will figure out its limits pretty quickly, and those limits will tell you exactly what to look for next. Do not be afraid of that process; that is just how it works.

If you are still not sure where to even begin, I would suggest trying llmfit. It detects your machine specs and ranks models based on what your system can realistically handle, so instead of guessing, you just get a list of models that will actually run well on your machine. It is a much better starting point than reading benchmark threads about hardware that is not yours.

Experiment, experiment, experiment!

The local LLM space also moves fast. A model that was impressive six months ago has probably been superseded by something smaller that runs better.

So there is genuinely no point agonizing over the perfect first pick. Just get something running, see what it can and cannot do, and go from there.

Ollama: A lightweight local runtime that lets you download and run large language models on your own machine with a single command.

- OS: Windows, macOS, Linux

- Developer: Ollama

- Price model: Free, Open-source