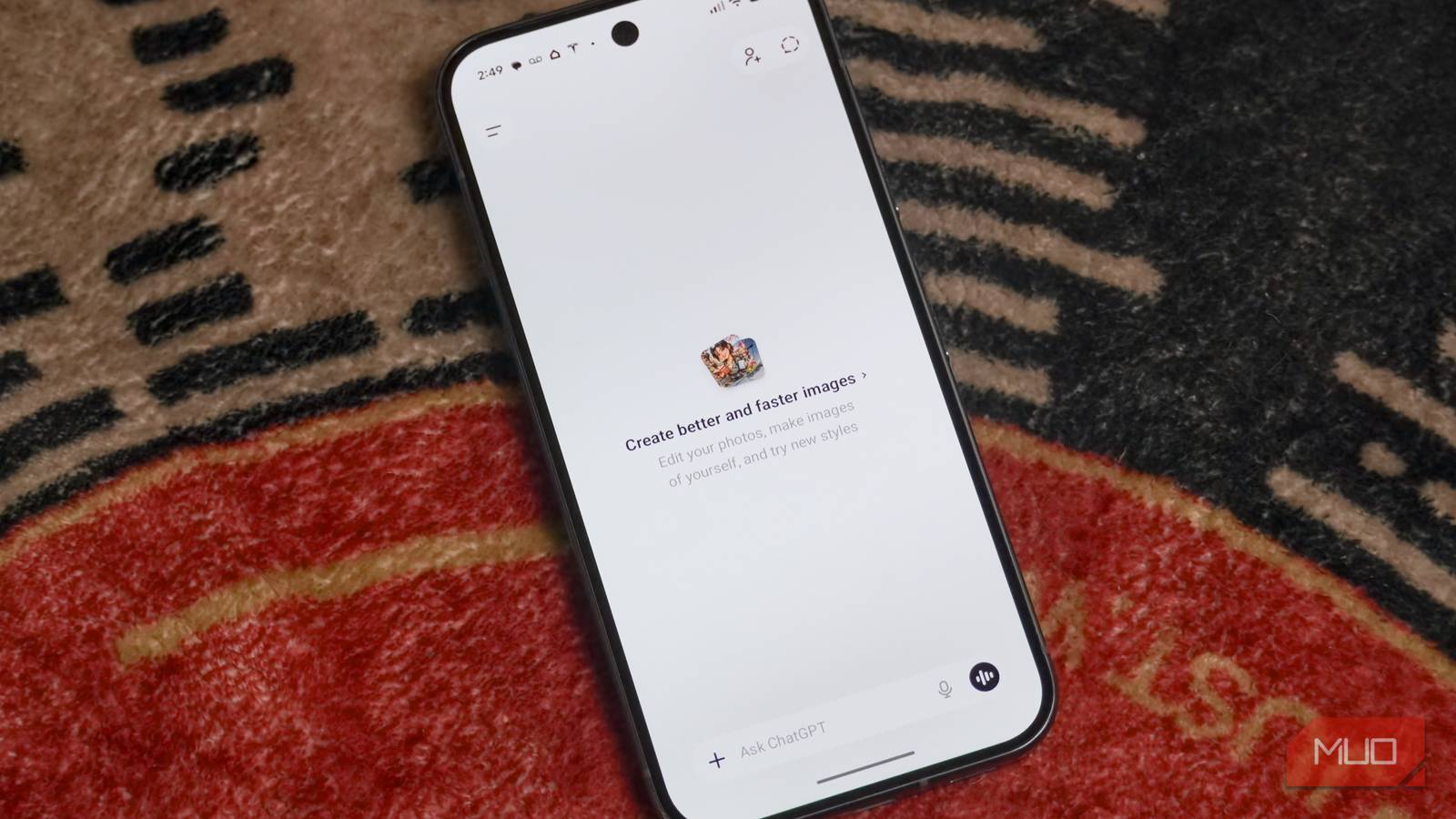

There’s buzz around “Cancel ChatGPT,” a movement aimed at convincing people to cancel their OpenAI subscriptions or to stop using ChatGPT altogether. The effort, documented by users on the r/ChatGPT subreddit, comes after OpenAI struck a deal with the U.S. Department of Defense (unofficially called the Department of War) to deploy AI models in classified government networks. The controversy isn’t about the deal itself, but how it came to be. Anthropic previously contracted with the Department of Defense, but the U.S. government took exception to the company’s safeguards against the use of AI for mass domestic surveillance or fully autonomous weapons systems.

Days of drama ensued, and U.S. President Donald Trump’s administration moved to designate Anthropic a supply chain risk, forcing government agencies and contractors to start phasing out use of the company’s models. Shortly after, OpenAI formed its own deal with the Department of Defense, leading many to wonder whether it accepted the government’s terms permitting AI use for “all lawful purposes.” OpenAI and its CEO, Sam Altman, have since tried to control the damage, saying the company amended its U.S. government agreement to add clear restrictions against using AI for mass domestic surveillance or fully autonomous weapons.

We might never know the exact terms of OpenAI’s deal with the U.S. government, but the optics are clear. It sure looks like the company swooped in to acquire a valuable government contract while Anthropic was putting its ethical principles first. That’s as good a reason as any to leave ChatGPT behind, and I have no problem doing just that.

Everyone is getting on the “Cancel ChatGPT” bandwagon

There are some red flags in OpenAI’s deal with the U.S. government

Anthropic, the company behind Claude, was the first frontier AI corporation to deploy its models in classified U.S. government networks. Theoretically, that gave it a leg up over the competition, but things became testy when the U.S. government demanded all guardrails be removed. The administration wants all AI contracts to permit the systems to be used for “all lawful purposes,” and requested Anthropic eliminate safeguards against using Claude for mass domestic surveillance and fully autonomous weapons systems. Here’s how Anthropic explained the situation in a blog post:

Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner.

However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do.

The U.S. government, in response, threatened to take the unprecedented step of labeling a domestic company as a “supply chain risk.” In an equal but opposite move, it threatened to use the Defense Production Act to force Anthropic to remove its hard guardrails. Anthropic’s stance became clear: “Regardless, these threats do not change our position: we cannot in good conscience accede to their request.”

That made it all the more shocking when OpenAI announced a deal with the Department of Defense just two days later, sparking a flurry of questions. Did the company behind ChatGPT give in to the government’s demands? The answer appears to be yes.

OpenAI and Altman are on a damage control mission right now, and they’re using the right language to make people think they included the same protections Anthropic demanded. That doesn’t hold up logically: why would the U.S. government publicly break up with Anthropic, whose models were already deeply embedded in U.S. government systems, if it wasn’t serious about removing these guardrails? It also appears to be factually untrue, as government officials, including Senior Official Jeremy Lewin, confirmed that OpenAI’s contract “flows from the touchstone of ‘all lawful use.’”

Altman’s public statements don’t exactly align with what is reportedly going on behind the scenes. CNBC reports that the OpenAI CEO told employees that the company doesn’t “get to make operational decisions” about how the Department of Defense uses its AI models.

It’s easy to say goodbye when the competition is good

Is ChatGPT really better than Claude or Gemini these days?

Regardless of how you feel about the U.S. government’s use of AI for defense, OpenAI’s handling of this situation should give you pause. It’s the latest in a long line of ethical concerns to come out of the company. Previously, OpenAI disbanded its “mission alignment team,” according to a report from Platformer. Even before that, OpenAI spun a non-profit company into a for-profit entity, blurring the lines between its original mission of safely developing artificial general intelligence (AGI) for all and its new goal of turning a profit. With a technology as controversial as AI at play, it’s hard to keep supporting a company with values that are clearly questionable.

The “Cancel ChatGPT” and “QuitGPT” trends are pushing users to switch to Anthropic’s Claude and other competitors, and it’s easy to see why. Frankly, there is little reason to keep using OpenAI products, and the company has been behind the curve for some time. Take a look at LMArena, a leading AI model benchmark, and you’ll see that the highest-ranked OpenAI model places sixth on the text and coding benchmarks. It’s consistently losing to models developed by Anthropic, Google, and xAI. For the vision benchmark, OpenAI ranks fourth. In the document benchmark, OpenAI ranks tenth.

OpenAI’s ChatGPT Image performs the best out of its models, earning the top spot on LMArena’s image editing benchmark. However, it’s just 10 points ahead of Gemini 3 Pro Image. Put simply, if you want the latest and greatest AI models, you should be looking beyond ChatGPT. Anthropic, Google, and xAI have not only caught up to OpenAI’s models but also surpassed them in recent months and years. Now is the best time to ditch ChatGPT for good because the competitors are more ethically sound and perform better.

Pick a service with strong guardrails that you can trust

Let’s make something clear: there isn’t a single AI company with perfect ethics. Anthropic itself ditched its industry-leading safety pledge last month, amending its commitment to stop training AI models if they couldn’t be proven safe. Now, Responsible Scaling Policy 3.0 only states Anthropic will pause development if it has a lead on its competitors. Similarly, Google dropped a provision of its AI guidelines that prevented uses that were “likely to cause harm” in early 2025. Microsoft, xAI, and Amazon have weak protections against mass domestic surveillance or fully autonomous weapons powered by AI.

With that being said, there’s little reason to keep using ChatGPT when its competitors are easier to trust — and are making better AI models. I ditched ChatGPT for Gemini long ago, mostly for performance reasons, as the best Google AI models consistently beat the best OpenAI models. I’m glad I did, because OpenAI’s deal with the U.S. government is surely cause for concern. You want to use and support products from an AI company that aligns with your values. If you don’t trust OpenAI or Google, you shouldn’t use their AI products. The same goes for Anthropic, Microsoft, xAI, and Amazon, or any other company for that matter.

I can’t tell you which AI company to trust — that’s your decision to make. I will warn you that there are plenty of reasons to be skeptical of OpenAI’s values and guardrails these days, and recommend you try alternatives like Claude or Gemini. They might just be better than ChatGPT for your needs, too.